Update: I’ve written a new HDRI tutorial that covers some more advanced stuff here:

http://blog.hdrihaven.com/how-to-create-high-quality-hdri/

It’s aimed a bit more at professional HDRI creators and assumes you already know the basics, so if you’re completely new to HDRIs you should read this post first :)

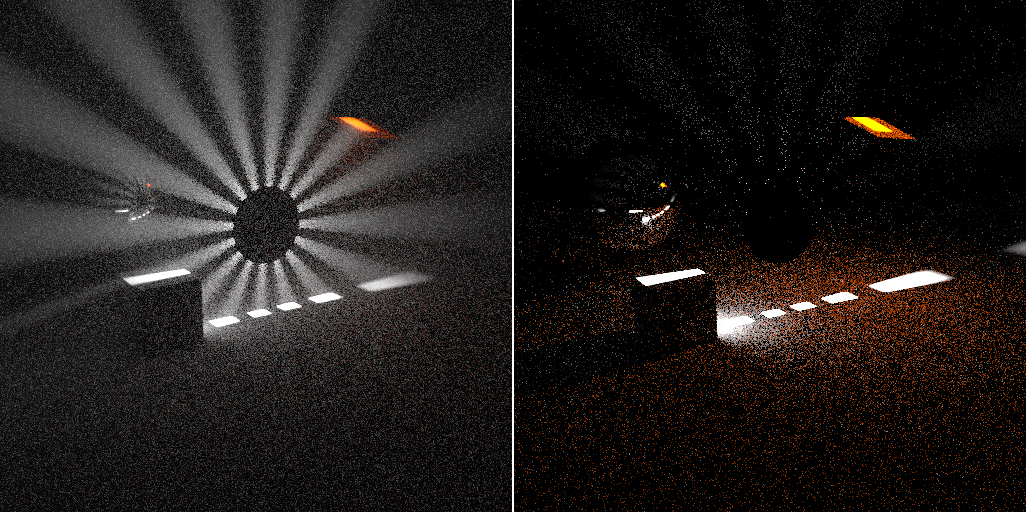

An HDRI.

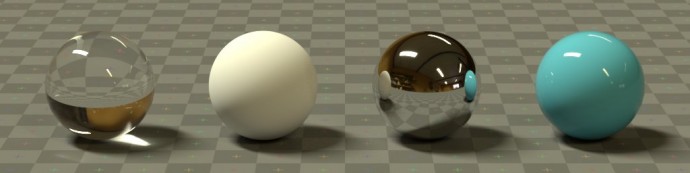

There is no easier or quicker way to light a CG scene than to use an HDRI. HDRIs are essentially snapshots of the real world that contain exquisitely detailed lighting information, and they can transport your bland CG objects into realistic virtual environments.

Not only do they provide accurate lighting, but they can also be seen in the background and in reflections, which makes them all the more immersive.

Creating a high-quality HDRI from scratch is quite a complicated task that requires very specific equipment and a meticulous workflow. A single mistake, such as using the wrong focal length or choosing a shutter speed that is too slow, can mean all your time has been wasted and you’ll have to start all over again.

I’ve been making HDRIs for a couple of years now, so I hope I can save you some time and experimentation. This is by no means the only way to make an HDRI, but it is a good introduction to the process.

By the end of this tutorial, you’ll have made your very own 360º HDR environment map that can be used to light a 3D scene.

Buckle your seat belts, boys and girls, because this is gonna be a long one!